MeNToS: Tracklets Association with a Space-Time Memory Network

Robust Video Scene Understanding: Tracking and Video Segmentation (CVPR-W RVSU 2021)

@inproceedings{miah2021mentos,
  author = {Miah, Mehdi and Bilodeau, Guillaume-Alexandre and Saunier, Nicolas},
  title = {{MeNToS}: {Tracklets} {Association} with a {Space}-{Time} {Memory} {Network}},
  year = {2021},
  booktitle = {{CVPR-W RVSU}}
}

Mehdi Miah, Guillaume-Alexandre Bilodeau, Nicolas Saunier

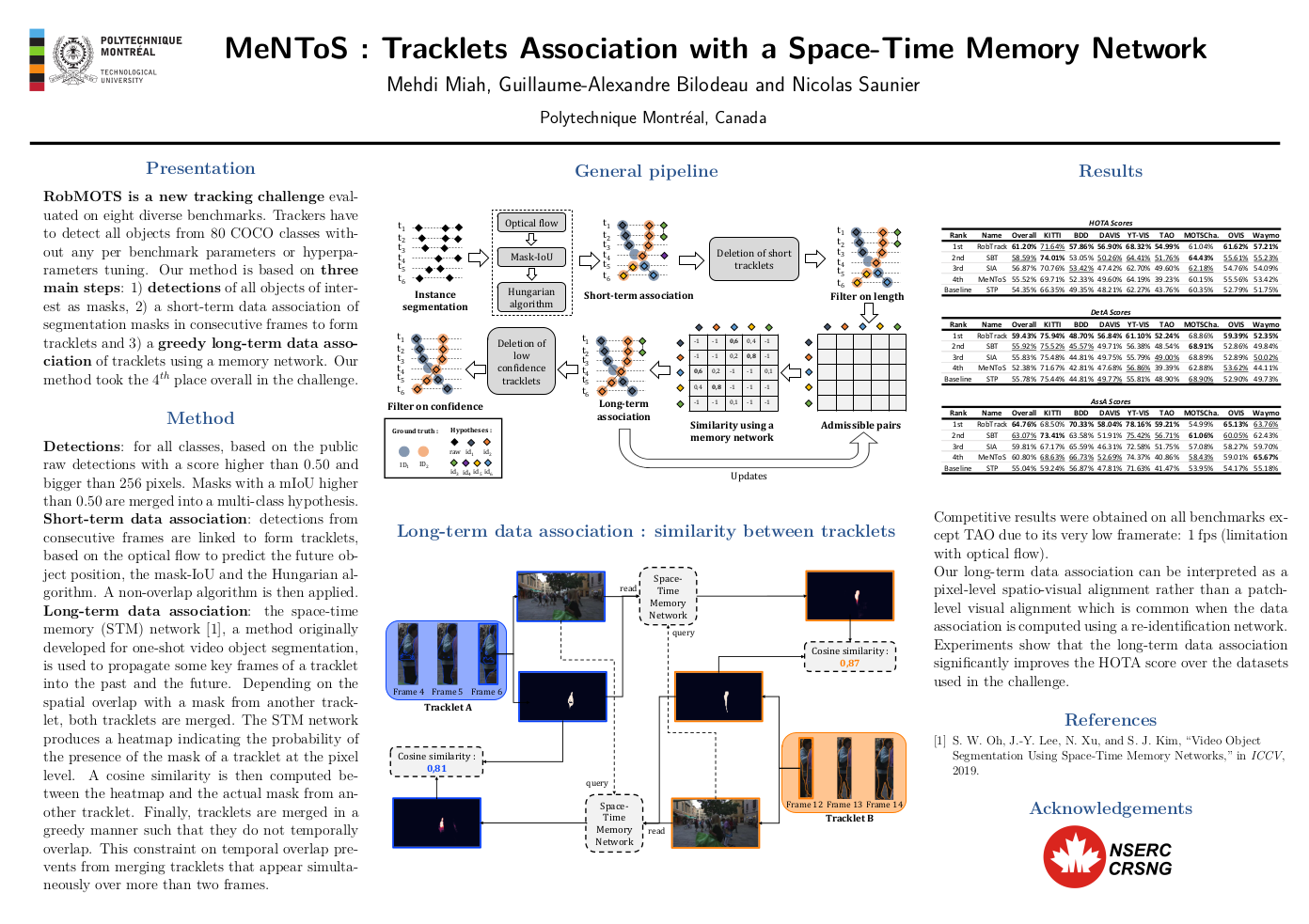

Abstract We propose a method for multi-object tracking and segmentation (MOTS) that requires neither fine-tuning nor per-benchmark hyperparameter selection. The method specifically addresses the data association problem. The recently introduced HOTA metric, which aligns better with human visual assessment by evenly balancing detection and association quality, has shown that data association still needs improvement. After creating tracklets using instance segmentation and optical flow, the method relies on a space-time memory network (STM), developed for one-shot video object segmentation, to improve the association of tracklets separated by temporal gaps. To the best of our knowledge, our method, named MeNToS, is the first to use the STM network to track object masks for MOTS. We placed 4th in the RobMOTS challenge.
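To illustrate why HOTA puts pressure on data association, the sketch below uses the metric's published definition: at each localization threshold, HOTA is the geometric mean of detection accuracy (DetA) and association accuracy (AssA), and the final score averages over thresholds. The function names and the numeric values are illustrative assumptions, not results from this work.

```python
import math
from typing import Sequence


def hota_at_threshold(det_a: float, ass_a: float) -> float:
    """HOTA at one localization threshold: geometric mean of DetA and AssA."""
    if not (0.0 <= det_a <= 1.0 and 0.0 <= ass_a <= 1.0):
        raise ValueError("DetA and AssA must lie in [0, 1]")
    return math.sqrt(det_a * ass_a)


def hota(det_a_per_alpha: Sequence[float], ass_a_per_alpha: Sequence[float]) -> float:
    """Final HOTA: mean of the per-threshold scores."""
    if len(det_a_per_alpha) != len(ass_a_per_alpha) or not det_a_per_alpha:
        raise ValueError("need one DetA and one AssA value per threshold")
    scores = [hota_at_threshold(d, a)
              for d, a in zip(det_a_per_alpha, ass_a_per_alpha)]
    return sum(scores) / len(scores)


# Hypothetical values: a strong detector paired with weak association.
weak_association = hota_at_threshold(0.81, 0.36)
# Same detector, association improved to match detection quality.
balanced = hota_at_threshold(0.81, 0.81)
```

Because the two terms are multiplied, a tracker with good detections but poor association is penalized as heavily as the reverse, which is why improving tracklet association can raise the overall score even when detections are unchanged.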

Acknowledgements

We acknowledge the support of the Natural Sciences and Engineering Research Council of Canada (NSERC) [DGDND-2020-04633 and DG individual 06115-2017].